Latency vs Throughput: Key Differences Explained

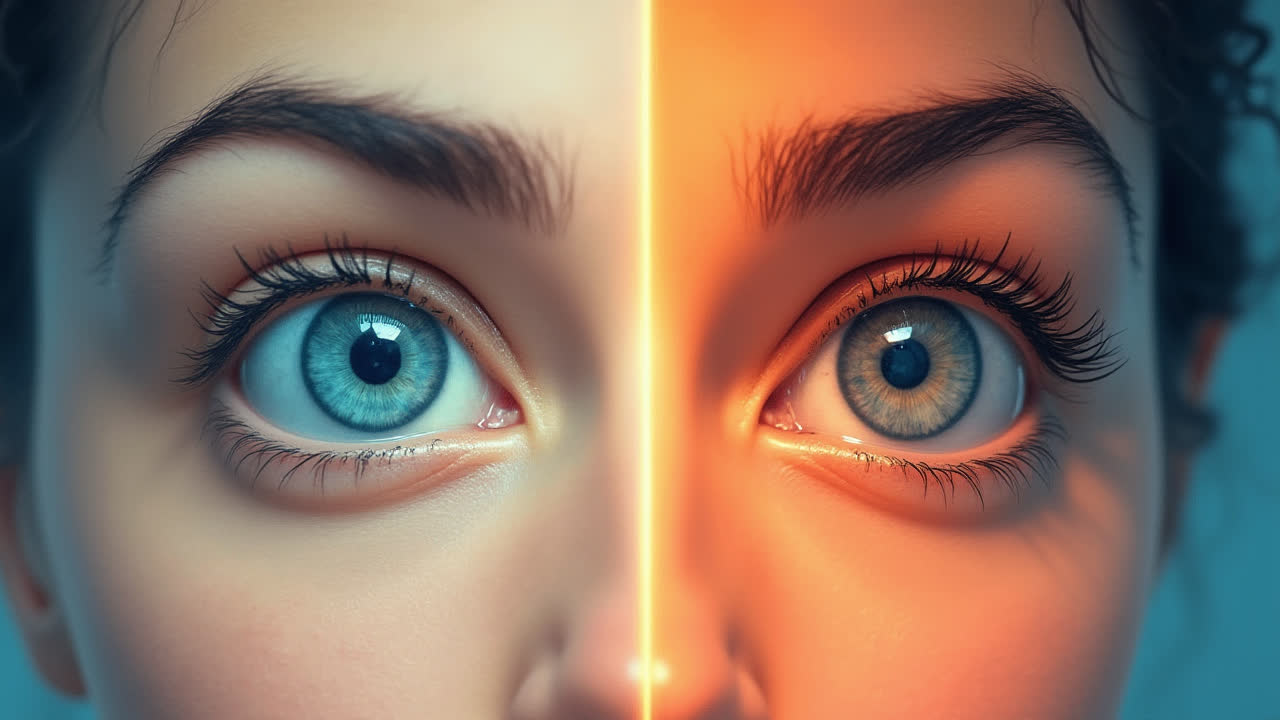

When it comes to understanding network and system performance, latency and throughput are two fundamental metrics that many people confuse. In my experience working with network systems, grasping these concepts is crucial for optimizing performance and troubleshooting issues.

Let me share what I've learned about these two essential metrics. Why do they matter? Imagine clicking a link and waiting for the page to start loading: that's latency in action. But when you're downloading several large files at once, how quickly they finish depends on throughput. Both shape your online experience, but in very different ways.

What is Latency?

Latency is essentially the delay, or waiting time, in a system. Think of it as the time it takes for your message to reach its destination, like the pause in a conversation over a satellite phone. When I first started working with networks, my mentor explained it this way: "Latency is like the time between knocking on a door and hearing someone answer."

In networking terms, latency is the time data takes to travel from source to destination. It is often reported as round-trip time (RTT), the time to get there and back again, which is what tools like ping measure. It's usually expressed in milliseconds (ms), and trust me, those milliseconds can feel like hours when you're trying to run a critical database query or play an online game!
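To make round-trip time concrete, here is a minimal Python sketch that times a TCP handshake. It assumes the target accepts TCP connections on the given port. The function name `tcp_connect_rtt_ms` is my own, and this is only an approximation, not what ping does: ping uses ICMP, which generally requires raw-socket privileges when called from Python.

```python
import socket
import time


def tcp_connect_rtt_ms(host: str, port: int, timeout: float = 2.0) -> float:
    """Approximate one round-trip time by timing a TCP handshake.

    connect() returns once our SYN has gone out and the SYN-ACK has come
    back, so the elapsed time is roughly one RTT plus a little local
    overhead (and DNS lookup time if `host` is a name, not an IP).
    """
    start = time.perf_counter()
    # create_connection resolves the host and completes the TCP handshake
    with socket.create_connection((host, port), timeout=timeout):
        elapsed = time.perf_counter() - start
    return elapsed * 1000.0  # seconds -> milliseconds
```

For example, `tcp_connect_rtt_ms("example.com", 443)` returns a rough RTT in milliseconds. Pass an IP address instead of a hostname if you want to leave DNS resolution out of the measurement.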

Real-life example: I once worked on a project where we were monitoring financial transactions. Even a 50ms increase in latency could cost the client thousands in lost trading opportunities. That's when the importance of latency really hit home for me.

Understanding Throughput

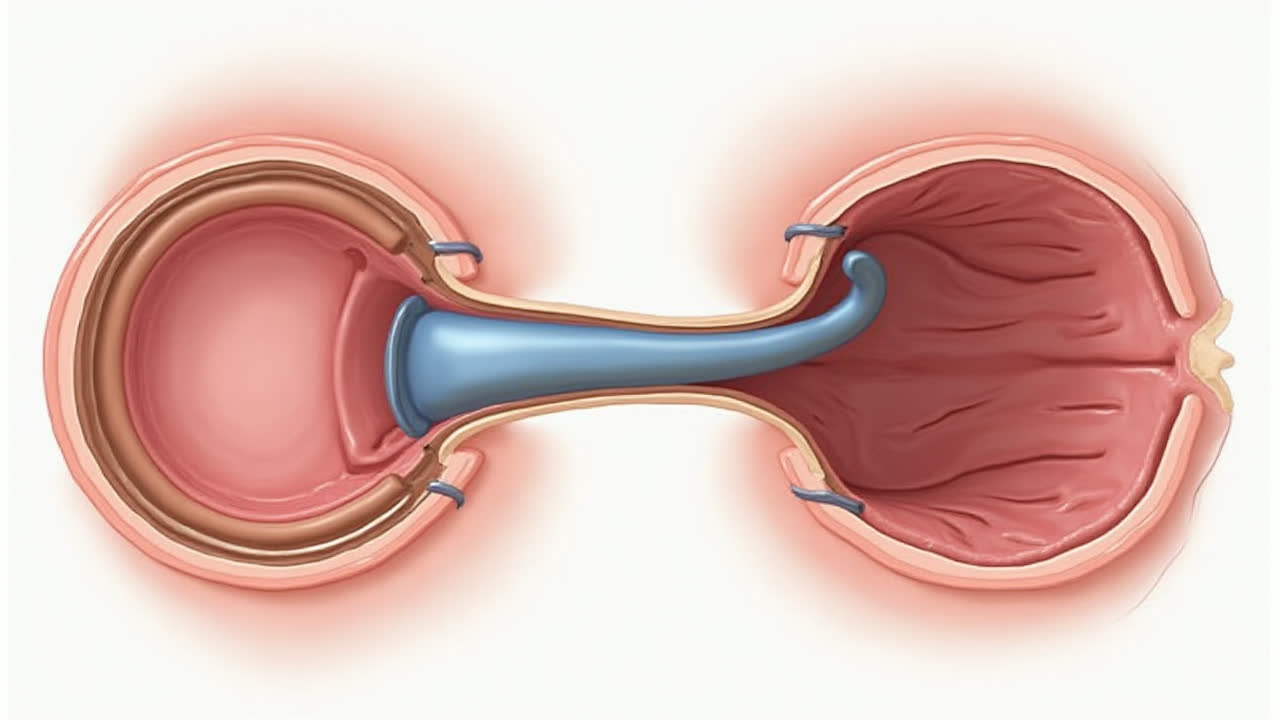

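Now, let's talk about throughput. If latency is about delay, throughput is about volume: the amount of data actually delivered over a network in a given time period, typically measured in bits per second (bps), kilobits per second (Kbps), megabits per second (Mbps), or gigabits per second (Gbps). Throughput is capped by bandwidth, the link's maximum capacity, but it usually falls below that cap because of congestion, packet loss, and protocol overhead.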
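Link speeds are quoted in bits, but file sizes and many download dialogs use bytes, so a quick conversion helps. Here is a small sketch. It assumes the decimal SI prefixes that networking rates normally use, and the helper names are hypothetical:

```python
# Network rates use decimal (SI) prefixes: 1 Kbps = 1,000 bps, 1 Mbps = 1,000,000 bps.
BITS_PER_BYTE = 8

RATE_FACTORS = {"bps": 1, "kbps": 1e3, "mbps": 1e6, "gbps": 1e9}


def to_bps(value: float, unit: str) -> float:
    """Normalize a rate given in bps, Kbps, Mbps or Gbps to plain bits/s."""
    return value * RATE_FACTORS[unit.lower()]


def mbps_to_megabytes_per_second(mbps: float) -> float:
    """Convert a link rate in megabits/s to megabytes/s (decimal MB)."""
    return mbps / BITS_PER_BYTE
```

So a 100 Mbps plan tops out at 12.5 MB/s of actual file data, and less once protocol overhead is taken out.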

I like to think of throughput as the width of a highway—a wider highway can accommodate more cars at the same time. Similarly, higher throughput means more data can flow through your network simultaneously. But here's the catch: just because a highway is wide doesn't mean cars travel faster on it, right?

A common misconception I encounter is people thinking higher bandwidth automatically means lower latency. That's not necessarily true! You can have a high-throughput connection with terrible latency, or vice versa.
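One way to see why the two metrics are independent is a simplified model: total time ≈ latency + size ÷ throughput. The sketch below uses a hypothetical `transfer_time_s` helper. It ignores TCP slow start, handshakes, and packet loss, so treat it as a back-of-the-envelope estimate rather than a prediction.

```python
def transfer_time_s(size_bytes: int, latency_s: float, throughput_bps: float) -> float:
    """Rough time to fetch `size_bytes`: a fixed delay plus time to push the bits.

    Simplified model: ignores TCP slow start, handshakes and retransmissions.
    """
    return latency_s + (size_bytes * 8) / throughput_bps


if __name__ == "__main__":
    small, large = 2_000, 500_000_000  # a 2 KB request vs a 500 MB file
    links = [("low latency, 20 Mbps", 0.010, 20e6),
             ("high latency, 1 Gbps", 0.150, 1e9)]
    for name, latency, rate in links:
        print(f"{name}: small={transfer_time_s(small, latency, rate):.3f}s "
              f"large={transfer_time_s(large, latency, rate):.1f}s")
```

With these illustrative numbers, the low-latency 20 Mbps link finishes the 2 KB request first (about 11 ms versus 150 ms). The 1 Gbps link finishes the 500 MB file far sooner (roughly 4 s versus 200 s).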

Latency vs Throughput Comparison Table

| Aspect | Latency | Throughput |
|---|---|---|
| Definition | Delay from input to output | Amount of data transferred per unit time |
| Measurement Unit | Milliseconds (ms) | Bits per second (bps) |
| What It Represents | Response time/delay | Data transfer capacity |
| Impact on User Experience | Affects responsiveness | Affects download/upload speeds |
| Key Factors | Distance, network hops, processing time | Bandwidth, congestion, protocol efficiency |
| Optimization Focus | Reducing delay | Increasing capacity |
| Analogy | Time to start delivery | Size of delivery truck |
| Critical For | Real-time applications | Bulk data transfers |

Real-World Applications and Examples

Let me share some scenarios where understanding these metrics really matters:

Gaming and Real-Time Applications

As a casual gamer myself, I can tell you that latency is crucial in online gaming. A ping of 20ms feels buttery smooth, while 150ms can make you feel like you're playing underwater. Throughput matters less unless you're livestreaming—then both become important.

Video Streaming Services

Here's something interesting: when Netflix buffers mid-show, it's usually a throughput issue (your connection can't keep up with the stream's data rate). But a long delay before playback starts? Latency is often a big part of that. I've seen households where one person's gaming, especially downloading a large game or update, causes buffering for someone watching Netflix: a classic throughput-sharing problem!
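Here is a rough way to reason about this kind of sharing: a stream buffers when its bitrate exceeds whatever throughput the other devices leave free. The sketch uses a hypothetical helper and hypothetical numbers. In practice, links share capacity dynamically and streaming services adapt their bitrate, so this is only a first approximation.

```python
def stream_will_buffer(link_mbps: float, other_traffic_mbps: float,
                       stream_bitrate_mbps: float) -> bool:
    """True if the stream needs more throughput than the link has left over."""
    # Throughput left for the stream after everyone else takes their share
    available_mbps = max(link_mbps - other_traffic_mbps, 0.0)
    return stream_bitrate_mbps > available_mbps
```

On a hypothetical 50 Mbps link, a 15 Mbps stream is comfortable while other devices use 10 Mbps. It starts to struggle once a big download pushes their usage to 40 Mbps.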

Cloud Computing and Remote Work

Working remotely, I've noticed that latency affects my responsiveness in video calls and remote desktop sessions. Meanwhile, throughput determines how quickly I can upload large design files or download software updates. Both impact productivity, but in different ways.

How to Optimize Latency and Throughput

Based on my experience troubleshooting network issues, here are some practical tips:

Reducing Latency

- Choose closer server locations when possible

- Use Content Delivery Networks (CDNs)

- Use a fast, nearby DNS resolver to cut lookup time (it can also help CDNs send you to a closer server)

- Consider HTTP/3, which runs over QUIC and needs fewer round trips to set up a connection

- Reduce the number of network hops
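Distance sets a hard floor on latency, which is why server location and route length matter. Light in optical fiber travels at roughly two-thirds of its speed in a vacuum, about 200 km per millisecond. That gives a quick lower bound on round-trip time. This is an approximation: real routes are longer than the straight-line distance and add queuing and processing delays.

```python
# Light in fiber covers roughly 200,000 km/s, i.e. about 200 km per millisecond.
FIBER_KM_PER_MS = 200.0


def min_rtt_ms(distance_km: float) -> float:
    """Physical lower bound on round-trip time over fiber (there and back).

    Ignores routing detours, queuing, and processing, so real RTTs are higher.
    """
    return 2 * distance_km / FIBER_KM_PER_MS
```

For a server 6,000 km away, the floor is already about 60 ms before any router or server does any work.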

Improving Throughput

- Upgrade to a higher bandwidth connection

- Use modern networking equipment that supports current standards

- Implement QoS (Quality of Service) so important traffic gets priority when the link is congested

- Consider using compression to reduce data size

- Optimize your application's data transfer patterns
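Compression raises effective throughput because fewer bytes cross the link for the same information. Here is a minimal sketch using Python's standard `zlib` module. The helper names are hypothetical, and the model ignores the CPU time spent compressing and decompressing.

```python
import zlib


def compression_gain(data: bytes, level: int = 6) -> float:
    """Original size divided by compressed size (higher = fewer bytes on the wire)."""
    compressed = zlib.compress(data, level)
    return len(data) / len(compressed)


def effective_throughput_bps(link_bps: float, gain: float) -> float:
    """Rate at which *original* data arrives when the link carries compressed bytes."""
    return link_bps * gain
```

Text such as logs, CSV, or JSON usually compresses well. Already-compressed formats like video, JPEG, or ZIP files gain little or nothing.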

Common Misconceptions and Pitfalls

I've encountered numerous misconceptions about these metrics over the years. One client once insisted that paying for more bandwidth would fix their slow database queries—it didn't, because their issue was latency, not throughput!

Another common mistake is misreading speed tests. Many online speed tests headline throughput (download and upload speeds) and give latency only a small "ping" figure, if they report it at all. For a complete picture, check both metrics separately.

Monitoring and Measuring These Metrics

In my professional toolkit, I rely on various tools to monitor these metrics. For latency, tools like ping and traceroute are invaluable. For throughput, I use iperf or commercial network monitoring solutions.
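To see how a throughput test works under the hood, this rough sketch does what iperf does in miniature: it pushes a fixed amount of data through a TCP connection and divides by the elapsed time. It only exercises the local loopback interface, so the number reflects your machine's network stack, not your internet connection. For real links, run iperf between two hosts. The function name and defaults are my own.

```python
import socket
import threading
import time


def loopback_throughput_mbps(total_bytes: int = 50_000_000,
                             chunk: int = 64 * 1024) -> float:
    """Send about `total_bytes` over a TCP connection on 127.0.0.1; return Mbps.

    Measures the local network stack only; not a substitute for iperf
    between two real hosts.
    """
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
    server.listen(1)
    port = server.getsockname()[1]
    received = 0

    def sink() -> None:
        # Receiver side: read until the sender closes the connection.
        nonlocal received
        conn, _ = server.accept()
        with conn:
            while True:
                data = conn.recv(chunk)
                if not data:
                    break
                received += len(data)

    receiver = threading.Thread(target=sink)
    receiver.start()
    payload = b"\x00" * chunk
    start = time.perf_counter()
    with socket.create_connection(("127.0.0.1", port)) as client:
        sent = 0
        while sent < total_bytes:
            client.sendall(payload)
            sent += len(payload)
    receiver.join()  # stop the clock only after every byte has been read
    elapsed = time.perf_counter() - start
    server.close()
    return received * 8 / elapsed / 1e6  # bits per second -> megabits per second
```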

Pro tip: Always measure under different conditions—peak hours vs. off-peak, different days of the week, and various weather conditions (yes, weather can affect satellite and wireless connections!).
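When you collect samples under different conditions, comparing a few summary statistics side by side makes the differences obvious. The tail (95th percentile) often reveals congestion that an average hides. The sketch below assumes you've already gathered latency samples in milliseconds, grouped under labels such as "peak" or "off-peak". The labels and function name are hypothetical.

```python
import math
import statistics
from typing import Dict, List


def summarize_latency(samples_by_condition: Dict[str, List[float]]) -> Dict[str, Dict[str, float]]:
    """Min/median/p95/max latency (ms) per measurement condition.

    Each list of samples must be non-empty.
    """
    summary: Dict[str, Dict[str, float]] = {}
    for condition, samples in samples_by_condition.items():
        ordered = sorted(samples)
        # Nearest-rank 95th percentile: smallest value with >= 95% of samples at or below it
        rank = max(math.ceil(0.95 * len(ordered)), 1)
        summary[condition] = {
            "min": ordered[0],
            "median": statistics.median(ordered),
            "p95": ordered[rank - 1],
            "max": ordered[-1],
        }
    return summary
```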

The Future of Latency and Throughput

As we move towards 6G and beyond, we're seeing interesting developments. Edge computing is reducing latency for critical applications, while technologies like SpaceX's Starlink are attempting to provide both low latency and high throughput in previously underserved areas.

The challenge remains balancing these two metrics effectively. From what I've observed, future solutions will likely prioritize latency or throughput dynamically based on each application's needs, something like intelligent QoS at the user level.

Practical Use Cases

Here are some real scenarios where understanding the difference matters:

Stock Trading Applications

In financial markets, microseconds matter. Traders care more about latency than throughput—they need immediate execution of small orders rather than the ability to handle large data volumes quickly.

Enterprise Backup Systems

For large-scale data backups, throughput is king. Companies can tolerate some latency if they can move terabytes of data efficiently during off-peak hours.
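A quick calculation shows why throughput dominates here. This sketch uses hypothetical helper names and assumes decimal terabytes and a sustained rate with no protocol overhead, so real transfers will take somewhat longer.

```python
def transfer_hours(size_tb: float, throughput_gbps: float) -> float:
    """Hours to move `size_tb` terabytes (decimal, 10**12 bytes) at a sustained rate."""
    bits = size_tb * 1e12 * 8
    return bits / (throughput_gbps * 1e9) / 3600


def fits_in_window(size_tb: float, throughput_gbps: float, window_hours: float) -> bool:
    """Will the transfer finish inside an off-peak window of `window_hours`?"""
    return transfer_hours(size_tb, throughput_gbps) <= window_hours
```

At a sustained 1 Gbps, 10 TB takes about 22 hours, which won't fit in a hypothetical 8-hour overnight window. At 10 Gbps it takes about 2.2 hours. A few extra milliseconds of latency barely register against numbers like these.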

Virtual Reality and AR Applications

Emerging VR applications demand ultra-low latency to prevent motion sickness, while also requiring sufficient throughput for high-resolution video streams. It's a delicate balancing act!
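To get a feel for both sides of the trade-off, the arithmetic below estimates the uncompressed data rate of a video stream and the time between frames. The resolution, color depth, and frame rate are illustrative assumptions, not the specs of any particular headset.

```python
def raw_video_bitrate_bps(width: int, height: int, bits_per_pixel: int, fps: int) -> int:
    """Uncompressed video data rate: pixels per frame x bits per pixel x frames per second."""
    return width * height * bits_per_pixel * fps


def frame_interval_ms(fps: int) -> float:
    """Time between consecutive frames, which bounds how long each frame can take."""
    return 1000.0 / fps
```

At 1920×1080, 24 bits per pixel, and 90 frames per second, the raw stream is about 4.5 Gbps, which is why VR video is compressed. A new frame is also due roughly every 11 ms.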

Frequently Asked Questions

Can higher bandwidth fix latency issues?

No. Higher bandwidth (which raises the ceiling on throughput) doesn't directly fix latency. Latency is about delay, while bandwidth is about capacity: a wider road doesn't make the traffic lights change any faster. You need to address the specific causes of latency, such as distance, network hops, or processing delays.

Which matters more for gaming, latency or throughput?

For most online games, latency is significantly more important than throughput. Games typically need quick response times (low latency) rather than high data transfer rates: most use relatively little bandwidth, but they're very sensitive to delays. As long as your connection covers a game's modest bandwidth needs, a 20ms connection will feel far better than a 200ms one.

How can I tell whether I have a latency or a throughput problem?

If websites are slow to start loading or your clicks feel delayed, you're likely experiencing latency issues. If downloads or uploads are slower than expected, or videos buffer frequently, those are throughput problems. You can test latency with ping and measure throughput with a speed test. For comprehensive diagnostics, use tools that report both metrics.
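If you can capture when the first byte of a response arrived and when the transfer finished, you can split a slow transfer into its waiting phase and its data phase. Sources include your browser's developer tools, or curl's `%{time_starttransfer}` and `%{time_total}` write-out variables (reported in seconds, so convert to milliseconds). The sketch below is hypothetical. Note that the waiting phase also includes DNS lookup, connection setup, and server processing, not just network latency.

```python
def split_transfer(ttfb_ms: float, total_ms: float, size_bytes: int) -> dict:
    """Split one transfer into its startup wait and its data-transfer phase.

    ttfb_ms: time to first byte; total_ms: time until the last byte arrived.
    """
    transfer_ms = max(total_ms - ttfb_ms, 1e-9)  # guard against division by zero
    rate_mbps = size_bytes * 8 / (transfer_ms / 1000) / 1e6
    # Hypothetical heuristic: whichever phase took longer is the main bottleneck
    dominant = "latency" if ttfb_ms > transfer_ms else "throughput"
    return {"wait_ms": ttfb_ms, "transfer_ms": transfer_ms,
            "rate_mbps": rate_mbps, "dominant": dominant}
```

For instance, a 1.25 MB response that waits 300 ms for its first byte and finishes 100 ms later was limited mostly by the wait, even though its data phase ran at about 100 Mbps.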

Conclusion

Understanding the difference between latency and throughput has been fundamental to my work in network optimization. While latency measures delay and throughput measures capacity, both play crucial roles in determining user experience and system performance.

Remember, optimizing one doesn't automatically improve the other. Whether you're a gamer, remote worker, or network administrator, knowing which metric to focus on can save you time, money, and frustration. Keep in mind that in the real world, you often need to balance both metrics for optimal performance.

Next time you're troubleshooting network issues, ask yourself: is this a "how long until it responds" problem (latency) or a "how much can it move per second" problem (throughput)? The answer will guide your optimization strategy and help you make more informed decisions about your network infrastructure.